We design our tools which design our jobs which design us. Or: why Excel sucks

Habituation helps us get down to work, but reflection engenders efficacy. How do we drive the latter?

You’re reading DisAssemble, a biweekly philosophy of tech newsletter aimed at those interested in creating better digital products.

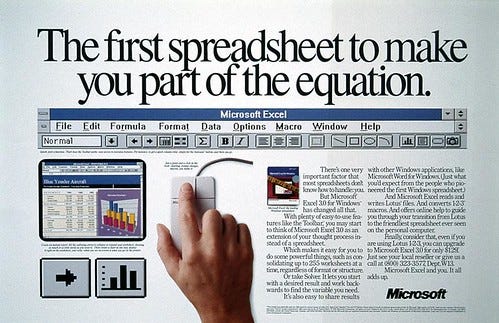

I am repelled by Microsoft products.

There's a stickiness to them, with their ancient legacy features.

Chunky toolbars get in your way. Invisible rules seem to make text disappear or change style. Everything feels so unashamedly enterprise, so 90s, so depressingly dry and staid.

It's as if they’re designed to tug you back into the past, like gum on the bottom of your shoe, pulling on your foot as you try to step forward.

Of Microsoft’s products I like Excel the least, though I could never quite articulate the reasons for my distaste.

But last year I read computer scientist Paul Dourish's book The Stuff of Bits, which finally provided me with some clarity. Dourish discusses in great detail how spreadsheet software - Excel in particular - shapes thought and action.

He explains how Excel, and other spreadsheet programs, were intended to model the financial state of a company. However, over time, these tools came to be used far beyond their remit.

Spreadsheets are now used for everything - planning, logging, tracking, organising, investigating, comparing - in almost any industry or public sector imaginable. Projects, programs and plans seemingly can’t exist without at least a handful of Excel docs attached to them.

The consequences of this being the go-to tool for all things work are enormous.

For one, spreadsheets require a determined granularity. In other words, they require data to be at a specific level of detail. An Excel doc, for example, might track software bugs, but drilling down into bug subtypes can't really be done. Sure, you can add another subtype column, but the standard unit is still 'bug'.

Excel also 'anticipates'. It ‘starts’ in the top left corner and directs the eye right or down. This grid necessitates an organising structure, which helps to dictate hierarchy and priority, even if such conceptual categories were never intended by the creator of the spreadsheet.

Excel also doesn't allow for fuzzy categories. Items are either part of a category or they are not. Overlap of a unit is not allowed.

All of these qualities perhaps reflect the scientific management ethos that came along with high modernism. In it, there existed a mania to break the world down into discrete quantitative units for surveillance and control. This perhaps speaks to my distaste toward Excel (though even I find it suitable for some activities - just not all).

As Jenny L. Davis taught us in my last newsletter, digital objects can afford different things to different people. Spreadsheets encourage linearity of thought and demand a single level of granularity and discrete categories. They discourage non-linear, fuzzy thinking and refuse multi-level representation - but only for people who think in those ways; those who aren’t of such a persuasion may never experience these inhibiting affordances.

It’s not difficult to imagine that people who think in more fuzzy, creative, non-linear ways are repelled by Excel, yet are often required to use it. They are implicitly encouraged to abandon these ways of thinking in work environments, and are instead trained to think in more parameterised ways.

Anne-Marie Willis, a professor of design theory, discusses “ontological designing”, which helps illustrate how this change occurs. This idea suggests that, among other things, we shape the things that we make and, in turn, are shaped by the things we make and the processes that make them.

She notes how we experience this shaping, not as something consciously self-examined, but as an embodied experience. She discusses her anxiety about all the work she has to do to clean up her garden:

"This is not the same as imagining, as in setting forth before my consciousness as an object to contemplate; rather it is a thinking as a being with, an embodied sensing/thinking which does not require actual physical presence, only memory of it, it is a thinking which prefigures doing and is a designing of the task and of my time".

The act of doing is, in a way, done before it is done, because we pre-experience it in an embodied way. When we consider doing something, we generally don’t consider it outside of its situated experience; we simply remember doing it as it has been previously done, given the affordance background that we have bodily experienced.

This background of affordances fades from view because it is not an object “set forth before... consciousness as an object to contemplate”. As I’ve noted previously, this is what Heidegger calls dasein, the embodied part of the world that is as much of us as we are of it. Dasein is the background with which we must “cope”. The more seamless our designed life and society become, the more unconsciously we are designed towards it.

It's only when “breakdown” occurs that we stop coping. That is, when things very obviously stop working we are forced to reflect on the character and meaning of our tools, the affordances around us.

We can get further conceptual clarity on this by understanding a couple of Heideggerian terms: “ready-to-hand” and “present-at-hand”. Ready-to-hand is when a tool is invisible to the person using it. A hammer is not the object of attention to a person hammering; the nail is - or more specifically, the goal within the network of relations that brings about the need to hammer that nail. If a hammer were to break (“breakdown”), it then becomes the object of focus - present-at-hand. It no longer acts as a tool because it no longer fits seamlessly into this network of relations. It needs to be fixed or replaced.

As an example, you might point to the beginning of the software development process “Agile” as coming about from a “breakdown” - a move from ready-to-hand to present-at-hand.

This occurred in the 80s and 90s, when software was driven by linear, waterfall projects that began with enormous lists of technical and functional requirements.

“People would come up with detailed lists of what tasks should be done, in what order, who should do them, [and] what the deliverables should be. The variation between one software and another project is so large that you can’t really plot things out in advance like that.”

This quote is from Martin Fowler, one of the founders of Agile.

Software developers’ work practices had been situated like a factory line; their embodied experience was one where they were resources rather than actors and enactors. “It made it so people were viewed as resources rather than valuable participants,” Fowler said.

To think of it in terms of ontological design, the way that people were “designed to think” by the affordance structures of the system in place wasn’t producing results, or treating people the way they needed to be treated.

Thus a breakdown occurred.

At a 2001 gathering in Snowbird, Utah, Fowler and 16 other “organisational anarchists” came up with the Agile Manifesto, which sought to establish collaboration, change, working software and individual interactions as principles for software development.

Since the manifesto, the vast majority of software development has followed Agile principles in one way or another. By default, software development has become iterative, with a focus on communication and constant testing, rather than a front-loading of detailed requirements.

In essence, the existing ways of working were so obviously and so badly broken that they couldn’t help but be the focus of attention. Practitioners had to design the affordance layer that would give them what they needed to work properly, and in doing so both reflected and shaped how people came to develop software.

A new network of affordances, and of relations between them, came about. And it became extremely successful. Agile is now wielded as a tool to solve all ills, similar to the spreadsheet. It has become the habituated background, dasein, and as such is used in industries far beyond software development.

Of course, Agile is not prescriptive in terms of material affordance. At its core, it is only a series of principles. However, this is rarely the case in practice, where material tools and activities are almost always associated with Agile: Jira, Sprints, sprint ceremonies, and so on.

But this is how the task, the material, and people interact. People become accustomed to certain affordances, the embodied, situated experience of doing an activity, as Anne-Marie Willis did with her garden.

Importantly, this habituation starts to define the ends of activity as well. A common retort to my dislike of Excel may be that Excel is used because it fits into how projects are managed. But that implies a uni-directional causality, when in fact projects are also structured to fit into Excel. The affordances, ways of thinking and even goals feed and shape one another in ways that increasingly hide other ways of being, until a severe breakdown occurs.

But waiting for a breakdown is obviously not enough. People labour under imperfect tools and practices but they still manage to get a job done, however imperfectly. Or they don’t even realise who or what they are excluding by using certain tools and practices. Breakdown only occurs when desired ends stop occurring, and not before.

The challenge, then, is removing constraints that inhibit the kind of reflection that questions the nature of the job and the activities needed to accomplish it.

This is where I believe freeform whiteboards have their strength (digital or otherwise). They allow planning and thought to occur in nearly any way that can be rendered on a two-dimensional screen. You can create kanbans, Venn diagrams, concept models, user journeys - even grids, should you want them.

At the risk of this becoming a sponsored post, I would suggest that tools like Miro embody a form that requires reflection on the best affordance structure for planning a project, event, or what have you. A wide open tool refuses little, and encourages consideration of technique and tool. In this way the kind of embodied, pre-figured thought of material activity isn’t directed toward a single technique; it’s about technique itself.

The above is an example of such a project planning/prioritisation/understanding artifact my team and I created at work. It’s a representation of a mixture of commentary from other people, notes, prioritisation, user flows and screenshots. It wasn’t planned; it emerged relatively naturally from the questions, needs, and people involved.

In UX, we often talk about ‘enabling constraints’, that is, constraints that give rise to creativity. But I would suggest that in considering how to do something, you need to go up a level and consider what the constraining structures need to be, rather than simply going with what you did last time. In essence, we are designing ourselves for reflection. Dasein should move from ready-to-hand to present-at-hand as a matter of course.

To put it more simply: the goal is for the question to shift from “what is the correct job for the tool” to “what is the correct tool for the job”. Ways of working need to be reflected on. Not given.

Thanks for reading - see you in a couple of weeks.

Header image from Microsoft Sweden